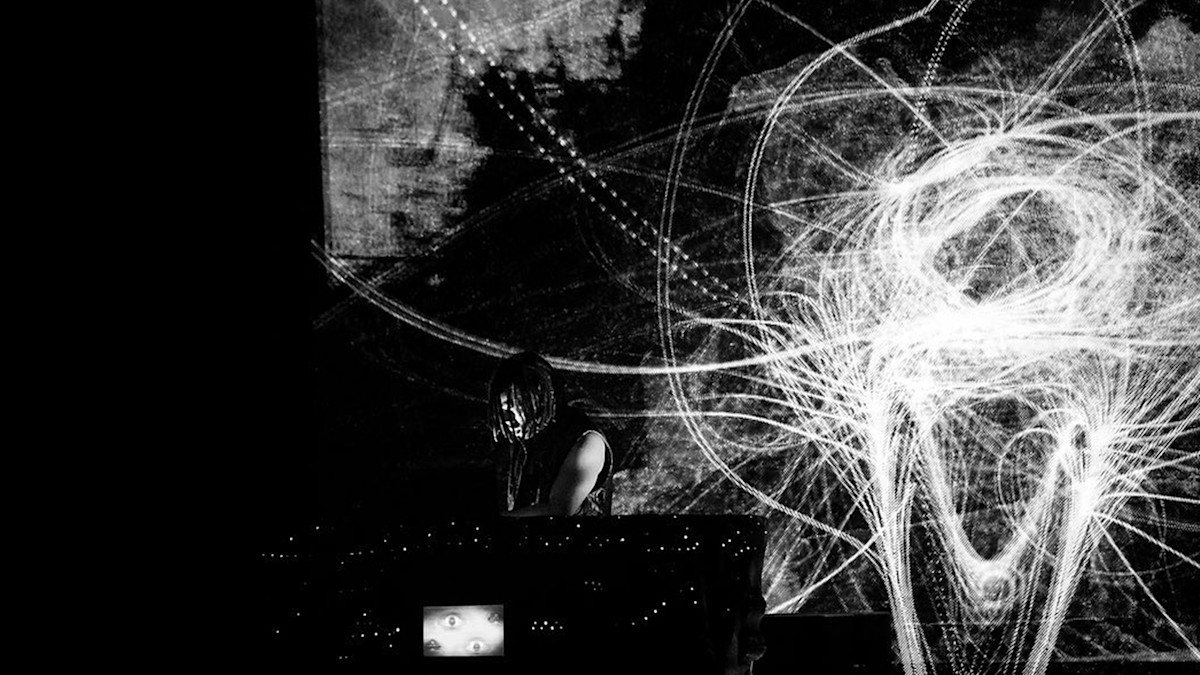

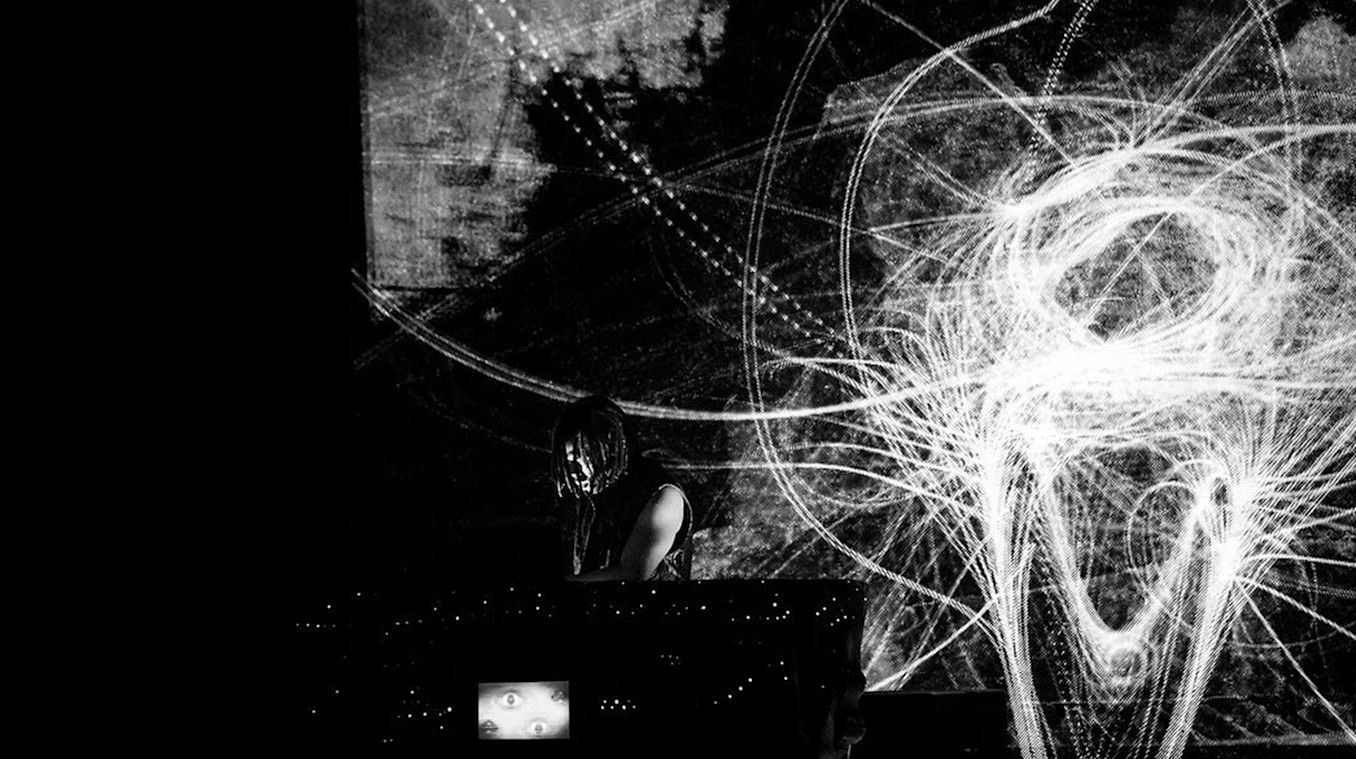

The show is a crazy mash-up of delectably obscene aesthetics, all brought together by Strangeloop Studios to create a window into another world.

Creative Director and Founder of Strangeloop Studios, David Wexler, shares his design story:

“In the case of the FlyLo show, it’s an evolving journey, over a decade in the making. The show itself has a long history, which has involved many collaborators and animators over the years. The new Flamagra record has informed additions to the show influenced by Lotus’s own cinematic voyages and the album artwork of Winston Hacking.”

“For this run, we were initially looking at creating some MIDI syncing with instrumentation that Lotus would be playing on stage, allowing a keyboard, for instance, to invoke some of the 3D visuals for the show. We never know what Lotus will play, though we do develop routines for different track shows over time. We also wanted to incorporate camera feeds within 3D space that we could manipulate and bring into our scenes, and Notch made that possible. Our camera feeds don’t simply end up on a 2D screen; they become stereoscopic 3D surfaces that emit particles, and pulse and deform in 3D space.”